
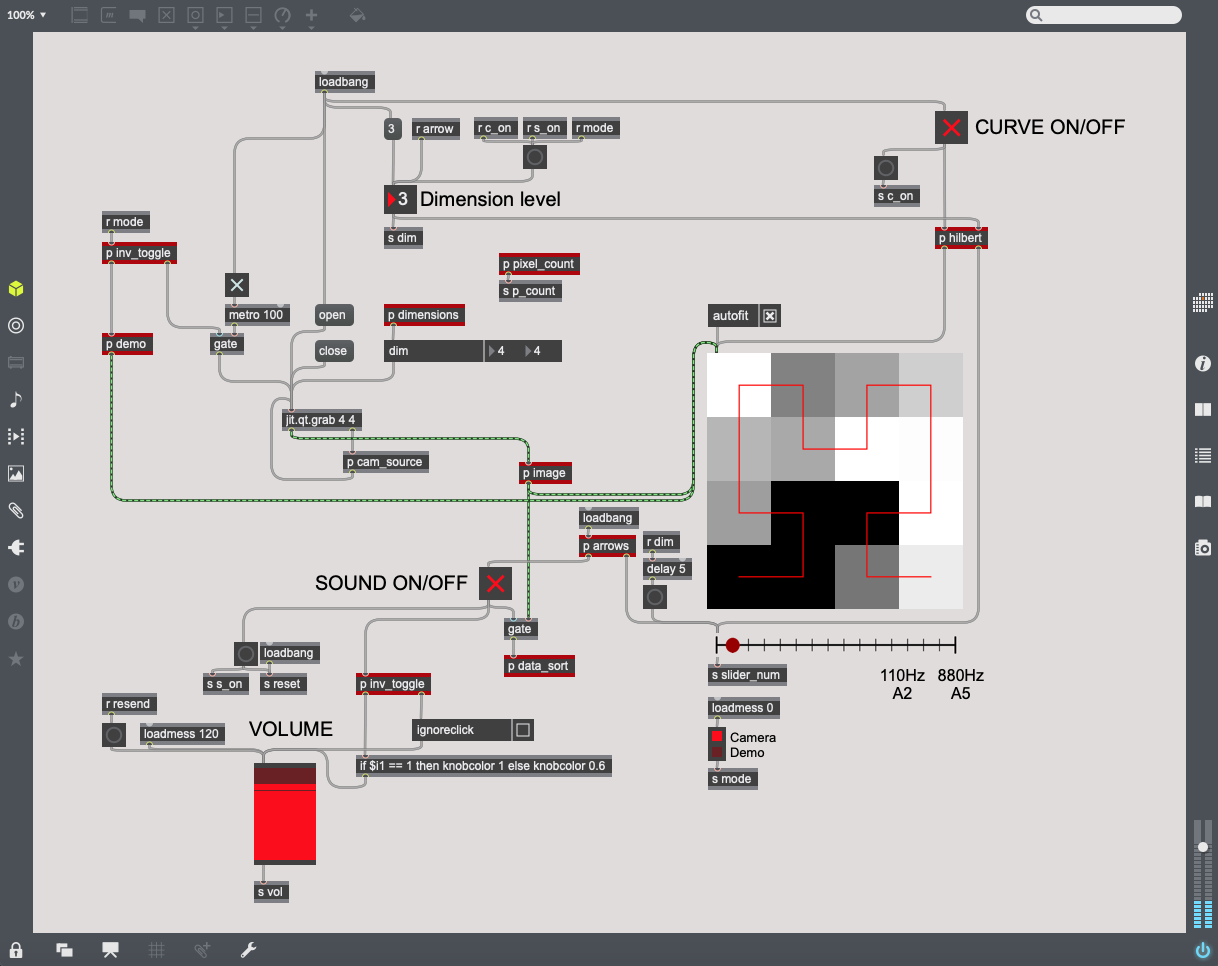

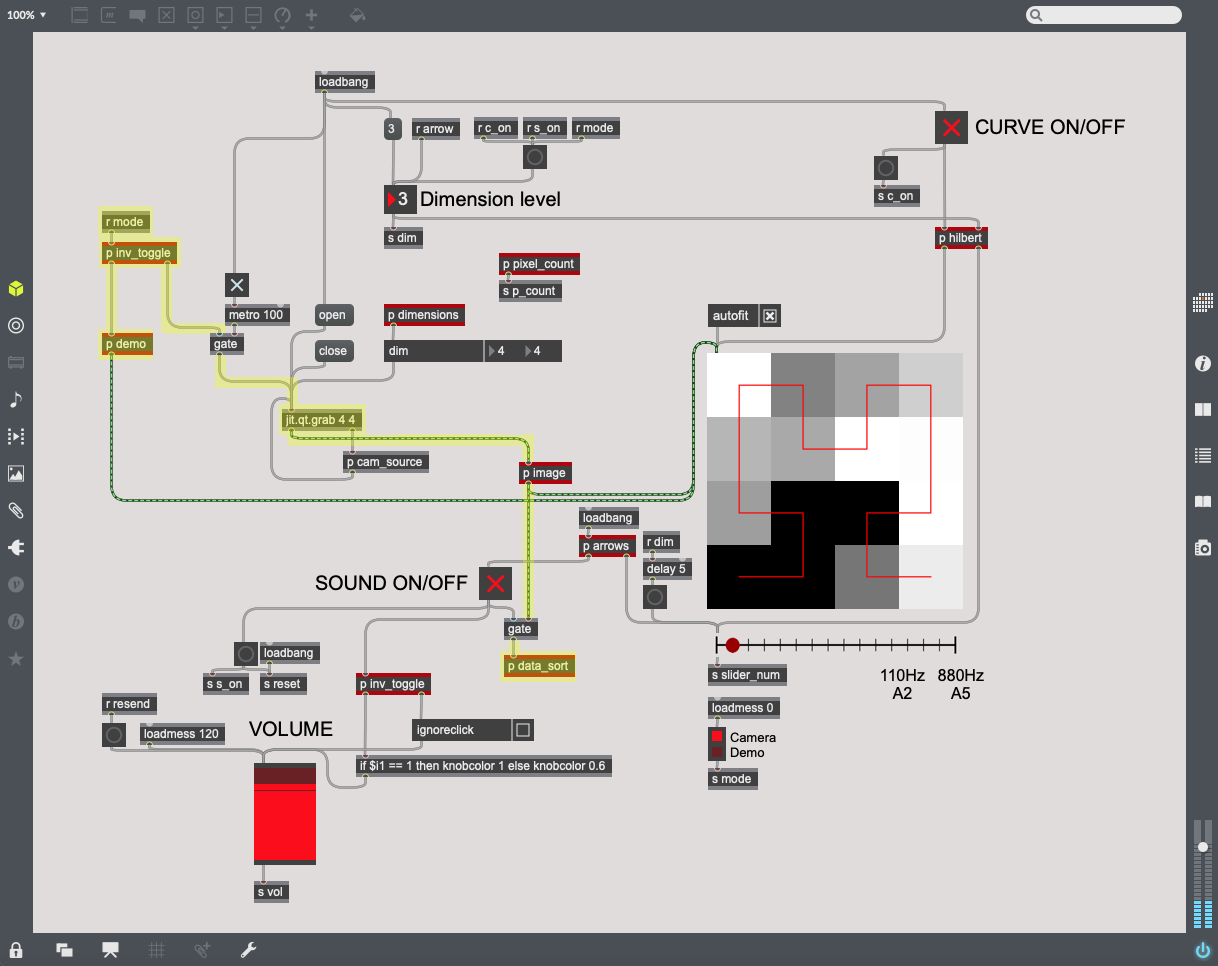

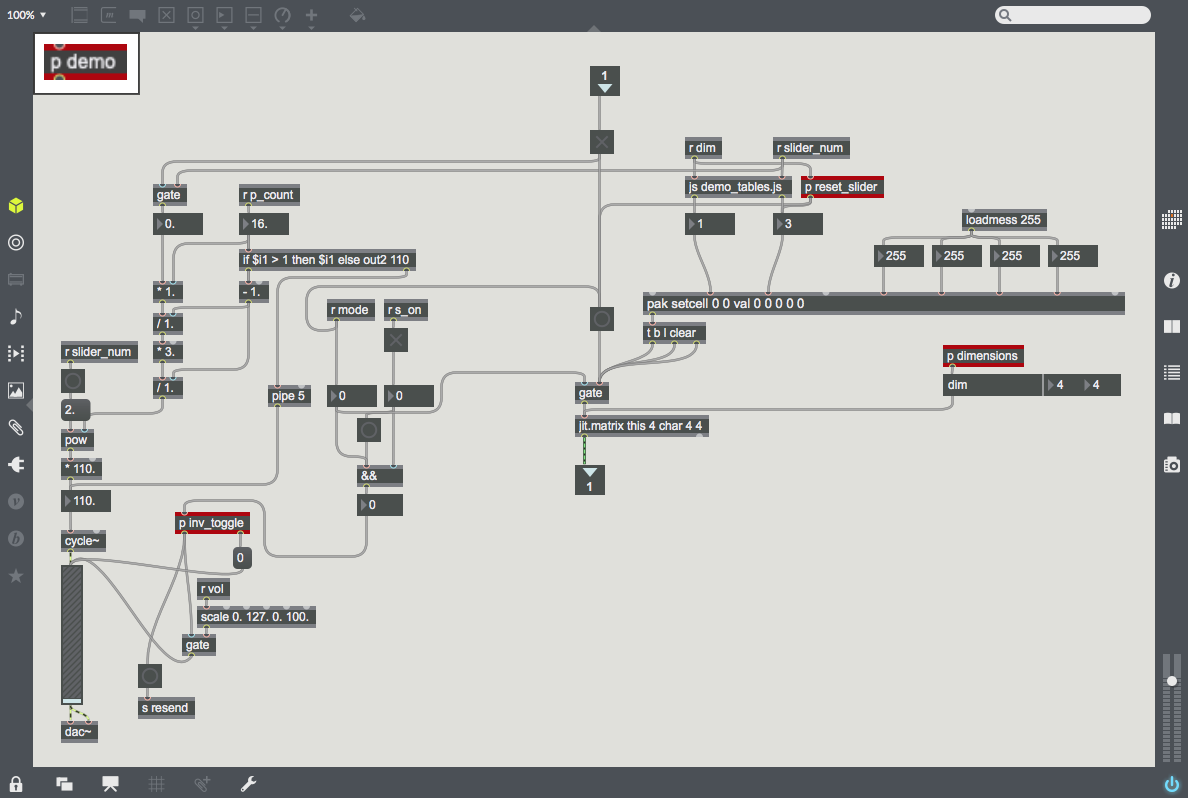

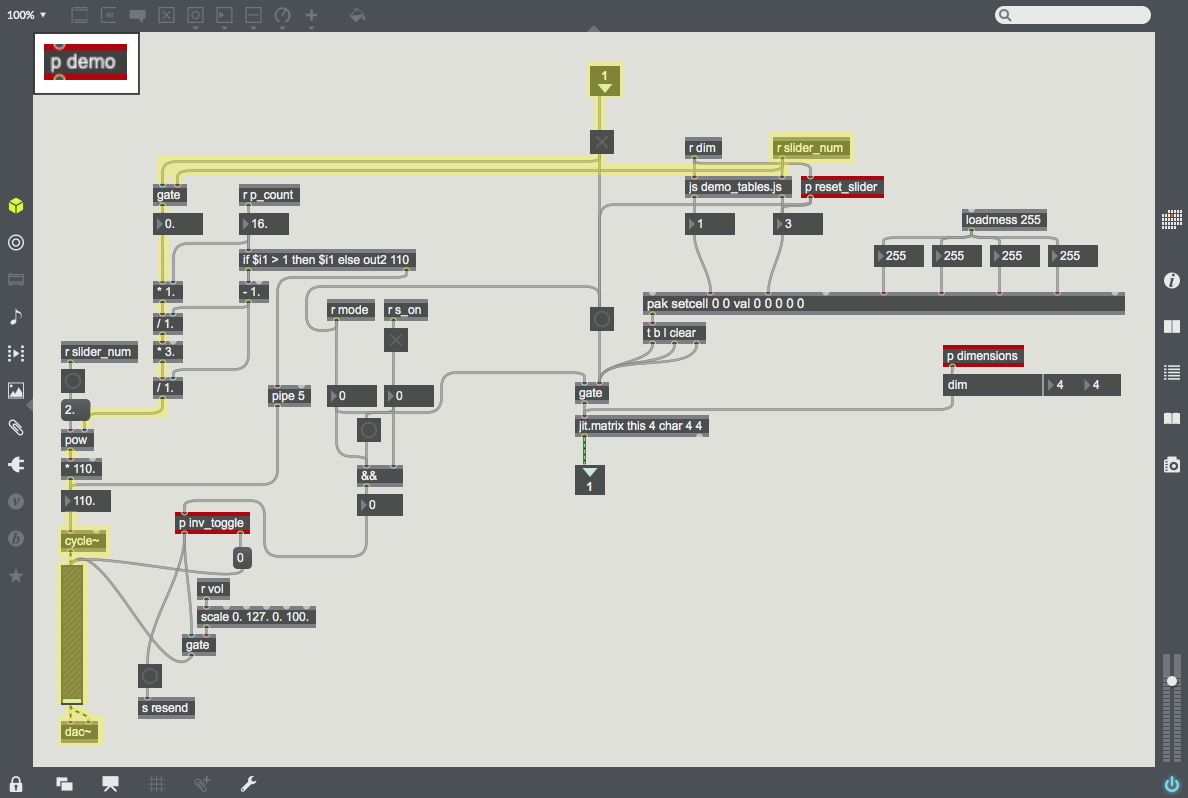

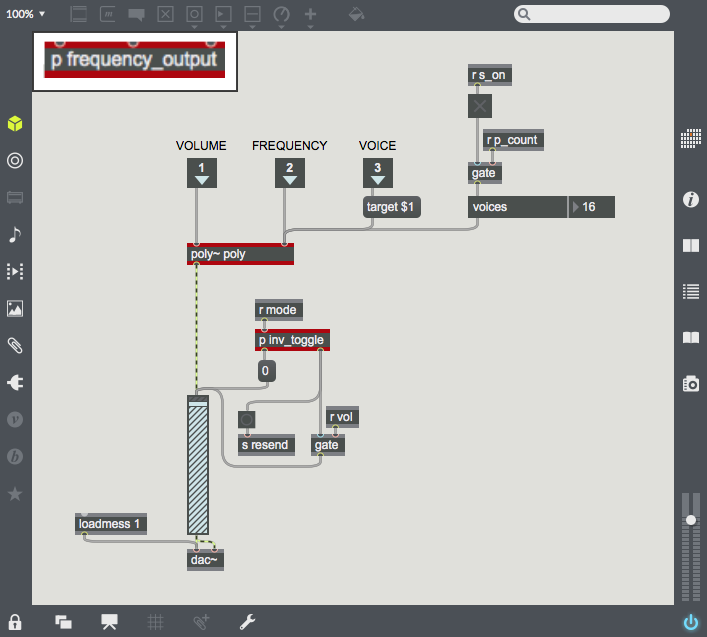

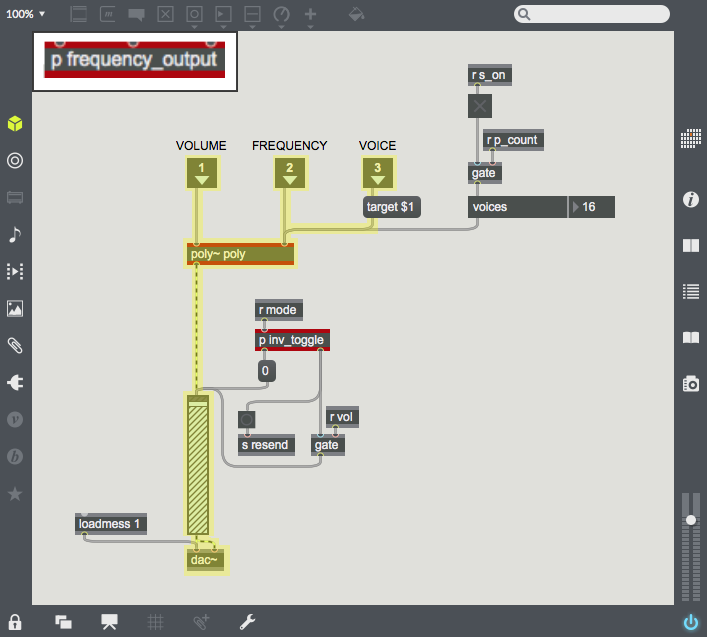

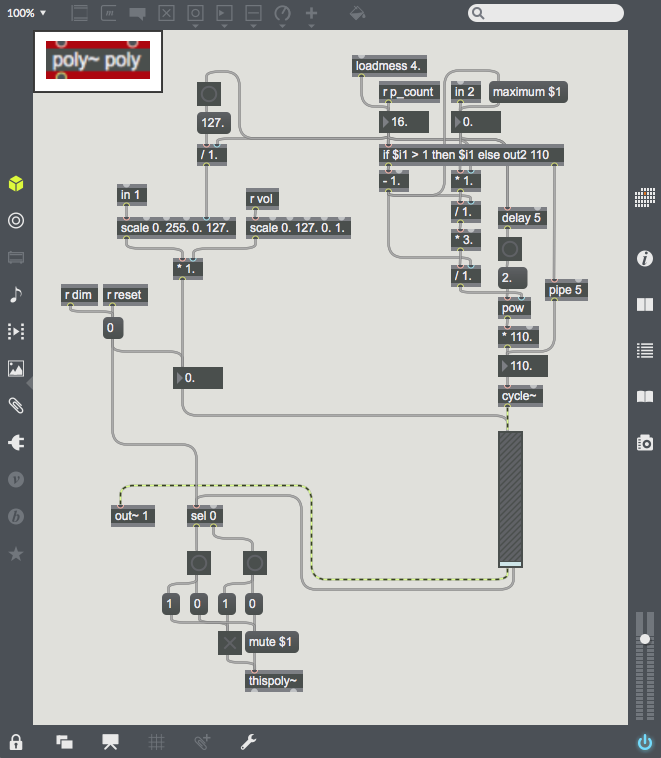

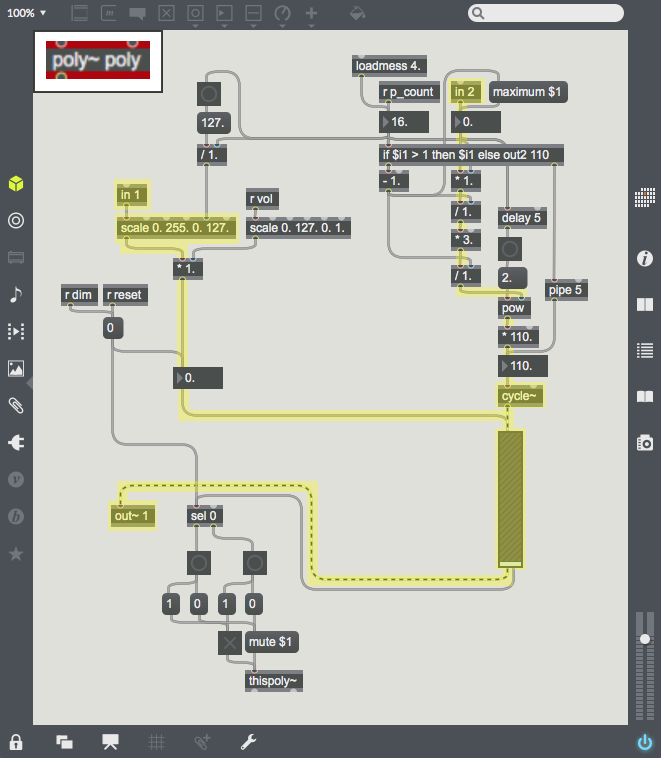

[[File:Demo_audio.png|200px|thumb|The left half of ''p demo'' uses the place along the Hilbert Curve (which is the slider value) to calculate that point's frequency. The frequency is then sent through ''cycle~'' and ''gain~'', and is finally output through ''dac~''.]]

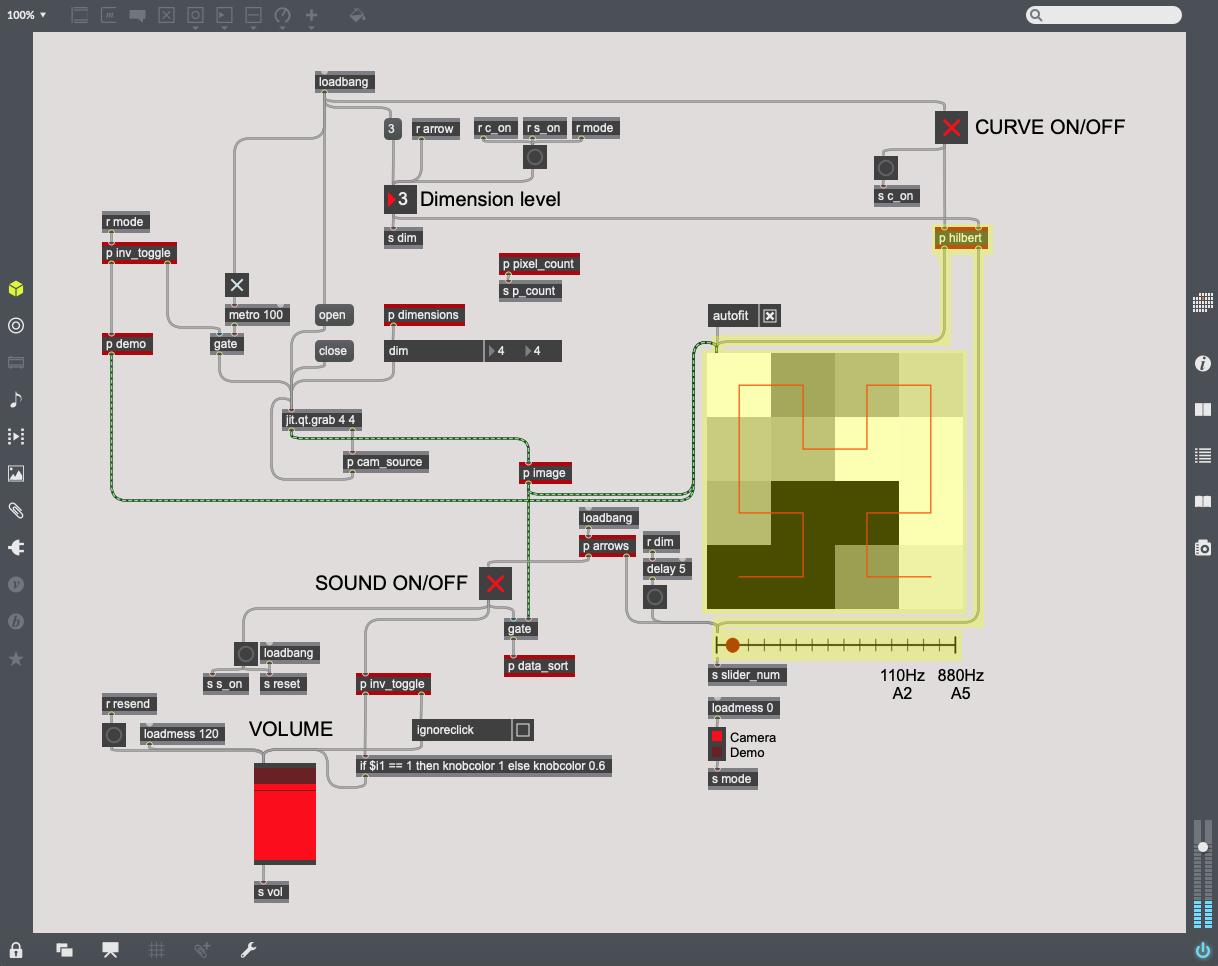

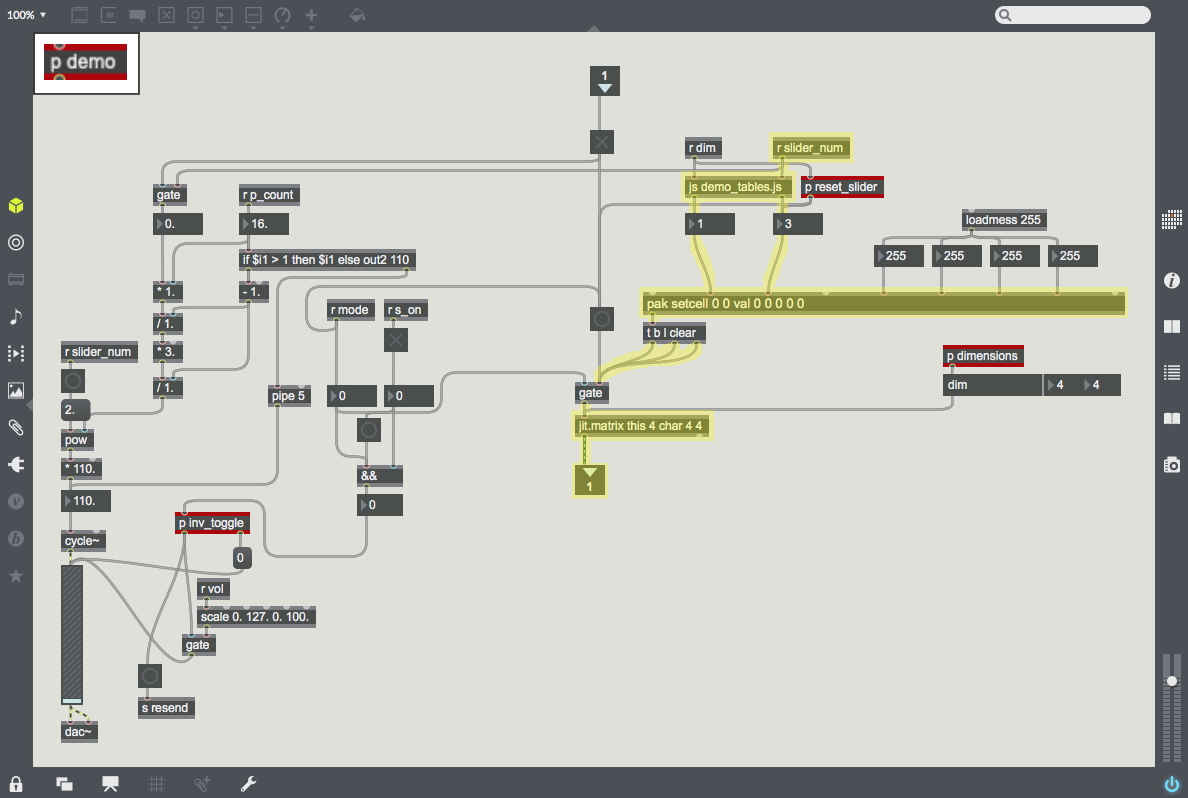

[[File:Hilbert_to_Point.png|200px|thumb|The right half of ''p demo'' uses JavaScript to take the place along the Hilbert Curve (which is the slider value) and output the xy-coordinates of the corresponding pixel. It then uses ''pak'' and ''jit.matrix'' to generate a matrix of black pixels with a single white pixel at that point (x,y).]]

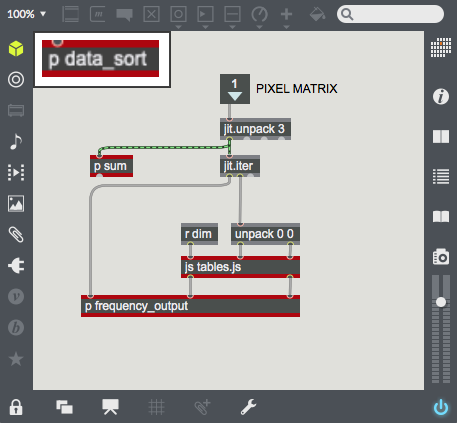

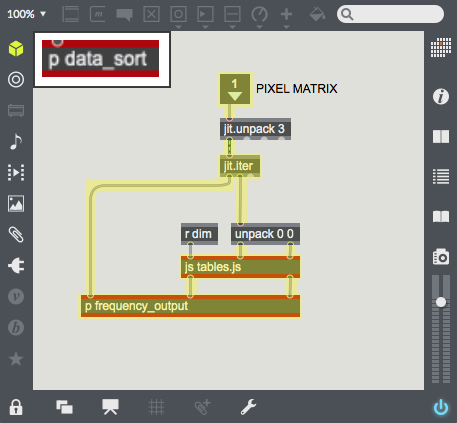

[[File:Point_to_Hilbert.png|100px|thumb|''jit.iter'' splits the camera data into pixel coordinates and their brightness values. The javascript file takes each pixel's xy-coordinates and outputs that pixel's place on the Hilbert Curve (from first to last, when unraveled). These values are sent into the subpatcher below.]]


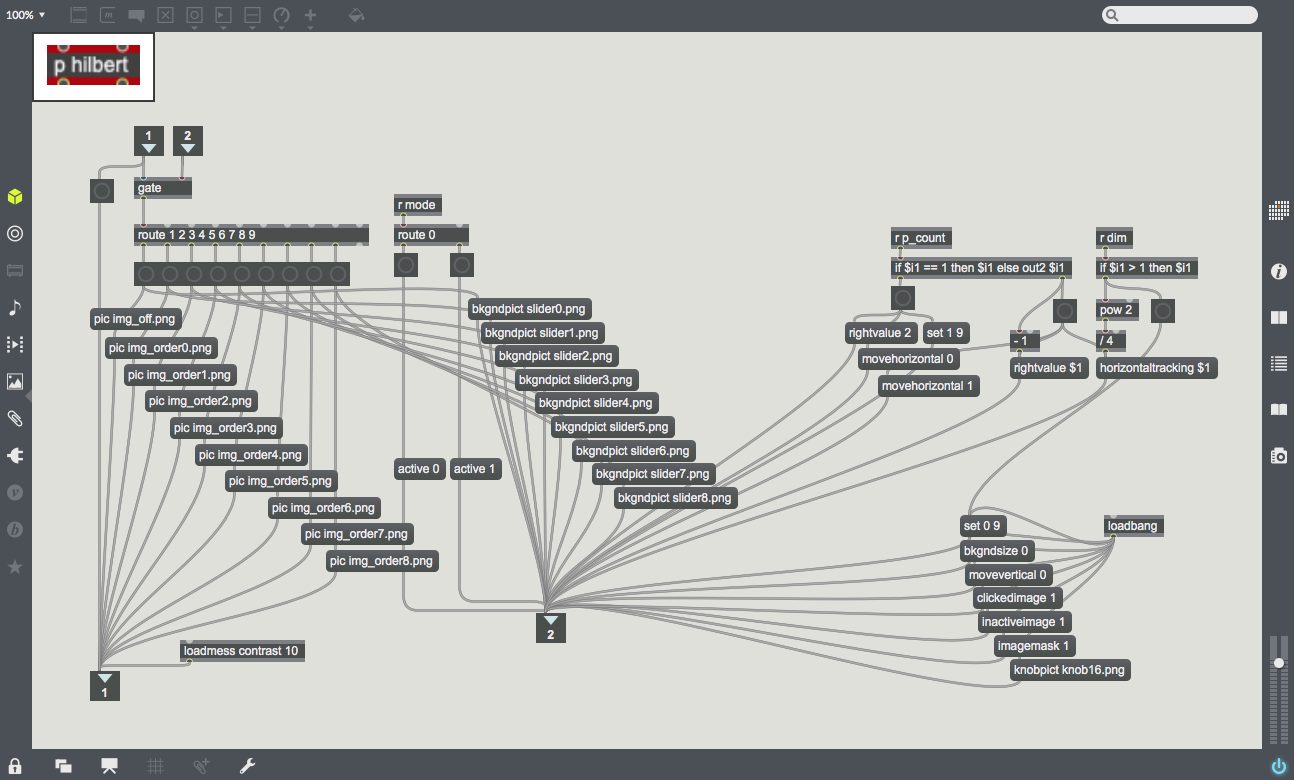

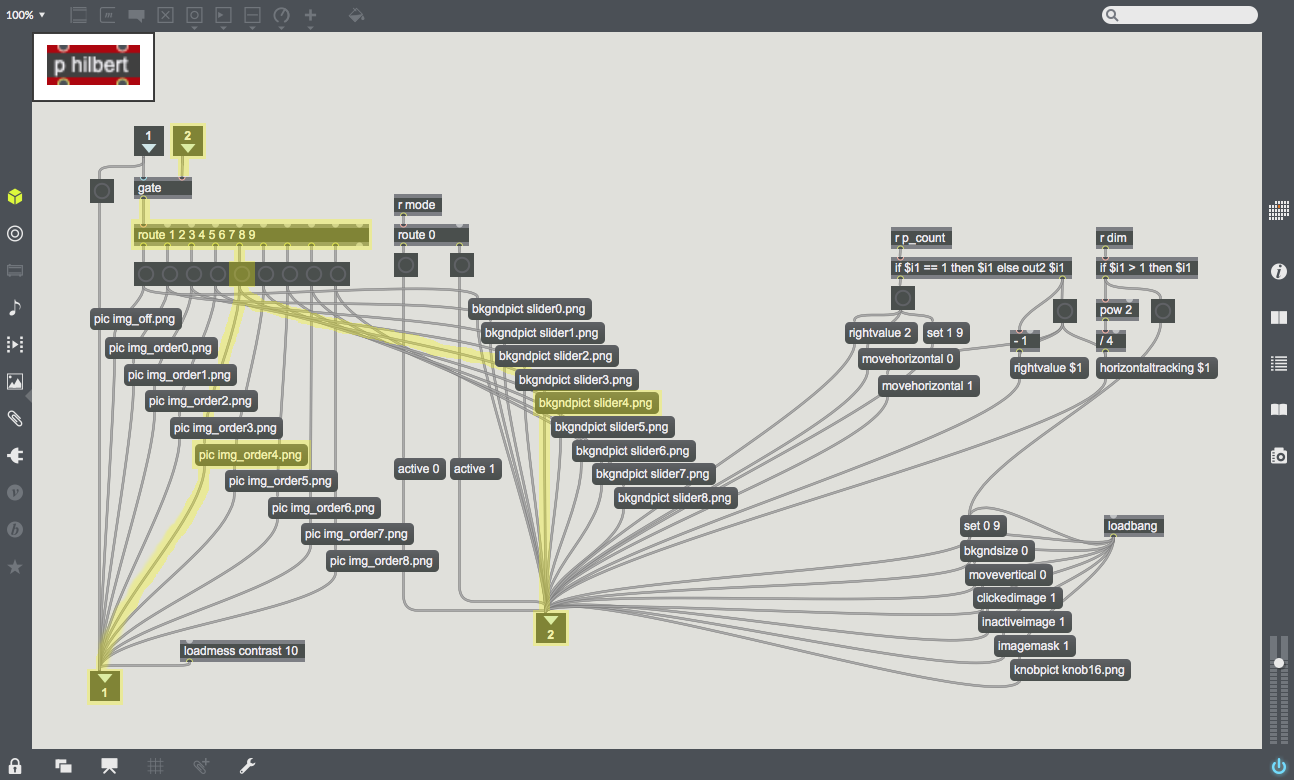

[[File:Image_selection.png|300px|thumb|The dimension level is taken as input, routed, and sent to the corresponding Hilbert Curve (''pic'') and slider (''bkgndpict''). On the far right are the parameters for the slider, which is an implementation of the object ''pictslider''.]]

Revision as of 00:32, 10 October 2019

Sight to Sound

This is a sight-to-sound application: software that takes a camera input and outputs a spectrum of audio frequencies. The creative task is to choose a mapping from 2D pixel-space to 1D frequency-space that could be meaningful to the listener. Of course, it would take someone a long time to relearn their sight through sound, but the purpose of this project is only to implement the software.

The mapping from pixels to frequencies used here is the Hilbert Curve. This mapping is desirable for two reasons. First, as the camera dimensions increase, each position along the curve converges toward a specific point in the image. Increasing the dimensions therefore yields a better approximation of the camera data, which becomes "higher resolution sound" in terms of audio-sight. Second, the Hilbert Curve preserves locality: positions that are close together along the curve always correspond to pixels that are close together in the image, and nearby pixels are usually (though not always) assigned frequencies near each other in frequency-space. Because it respects these two intuitions of sight, the Hilbert Curve is an excellent choice of mapping for this hypothetical software.
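As a rough illustration of the mapping described above, the following is a minimal sketch in plain JavaScript of the standard iterative Hilbert Curve conversion between a pixel (x, y) and its place d along the curve. It is not the code from the project's own JavaScript files, which are not shown here. The function names ''xy2d'', ''d2xy'', ''rot'' and ''indexToFrequency'' are hypothetical, the grid side ''n'' is assumed to be a power of two, and the logarithmic frequency spacing and the frequency range are assumptions made only for the example.

```javascript
// Rotate/flip a quadrant so each sub-curve is oriented correctly.
// n is the side length of the current square.
function rot(n, x, y, rx, ry) {
  if (ry === 0) {
    if (rx === 1) {
      x = n - 1 - x;
      y = n - 1 - y;
    }
    return [y, x]; // swap x and y
  }
  return [x, y];
}

// Pixel (x, y) on an n-by-n grid -> place d along the curve (0 .. n*n - 1).
function xy2d(n, x, y) {
  var d = 0;
  for (var s = n >> 1; s > 0; s >>= 1) {
    var rx = (x & s) > 0 ? 1 : 0;
    var ry = (y & s) > 0 ? 1 : 0;
    d += s * s * ((3 * rx) ^ ry);
    var p = rot(n, x, y, rx, ry);
    x = p[0];
    y = p[1];
  }
  return d;
}

// Place d along the curve -> pixel [x, y] on an n-by-n grid.
function d2xy(n, d) {
  var x = 0, y = 0, t = d;
  for (var s = 1; s < n; s *= 2) {
    var rx = 1 & (t >> 1);
    var ry = 1 & (t ^ rx);
    var p = rot(s, x, y, rx, ry);
    x = p[0] + s * rx;
    y = p[1] + s * ry;
    t >>= 2;
  }
  return [x, y];
}

// ASSUMPTION: the patch's actual frequency formula is not given in the text.
// This hypothetical helper spaces frequencies logarithmically between
// fMin and fMax (in Hz) over the n*n places on the curve.
function indexToFrequency(d, n, fMin, fMax) {
  var last = n * n - 1;
  return fMin * Math.pow(fMax / fMin, d / last);
}
```

For example, on an 8×8 grid, ''xy2d(8, 0, 0)'' gives place 0, and ''d2xy(8, xy2d(8, x, y))'' returns the original pixel for every (x, y) on the grid. Consecutive places along the curve always land on adjacent pixels, which is the locality property the text relies on.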

The video below demonstrates the concept in Max. For a better understanding, check out this video by YouTube animator 3Blue1Brown: link